Luck? No! How Builders Manufacture the "Accidents" Outsiders Call Magic

The proven secret to engineering startup luck. Perfect predictability is blinding your team to a massive breakthrough. Unlock your momentum today.

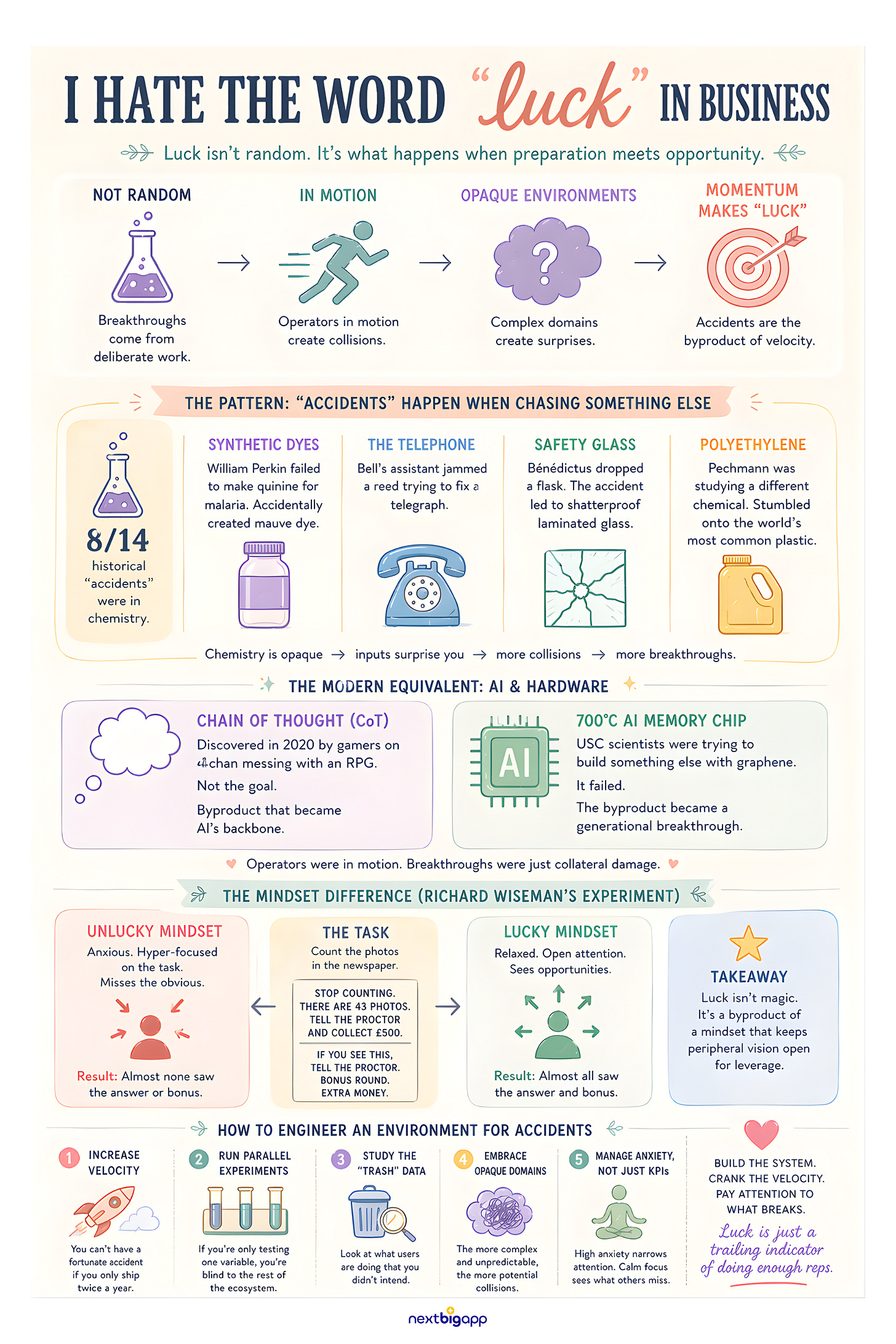

I hate the word “luck” in business.

Whenever a product or service achieves massive success, we instinctively start looking for the catch.

We tell ourselves things like, “They must be inflating their numbers”, or “They definitely gamed the system”.

Granted, those are purely cynical assumptions that aren’t even worth dwelling on, but I believe our most dangerous habit of all is writing off true success as a “happy accident”.

We never seem to forget about those “lucky discoveries” simply because they make for great headlines. We’ve all heard the stories: the spilled chemical that led to rubber, or the forgotten petri dish that gave us penicillin.

It’s a comfortable narrative.

It makes greatness feel random, like a winning lottery ticket, which gives people an excuse for why they haven’t hit it big themselves.

But if you are a founder or a builder who actually ships product, you know that “luck” is a lie sold to outsiders.

You don’t just trip and fall into an unfair advantage. You engineer a system that moves so fast, a collision is inevitable.

Here is the reality check: Out of the 14 major “accidental” inventions between 1800 and 1970, 11 happened during deliberate, structured research.

They weren’t random. The breakthrough wasn’t the original goal, but the original goal forced the operator into motion.

You don’t engineer the accident. You engineer the momentum that makes the accident possible.

The Historical Pattern of “Failure”

If you look closely at history, accidental inventions share a distinct pattern: they happen when people are aggressively trying to build something else.

Synthetic Dyes: William Perkin accidentally created mauve dye, and birthed the entire synthetic chemical industry, because he was failing to synthesize quinine for malaria.

The Telephone: Alexander Graham Bell’s breakthrough happened only because his assistant, Watson, jammed a transmitting reed while trying to fix a harmonic telegraph.

Safety Glass: In 1903, Édouard Bénédictus dropped a glass flask. It shattered but held its shape. Why? Because the liquid collodion inside had evaporated, leaving a plastic film. He wasn’t trying to invent car windshields; he was just doing the reps.

Polyethylene: Hans von Pechmann stumbled onto the world’s most common plastic while investigating the decomposition of a completely different chemical.

The original data shows that 8 out of these 14 historical “accidents” were chemical inventions.

Why?

Because chemistry is opaque. You mix inputs, and the outputs surprise you.

Mechanical engineering is predictable; you can’t build a machine by accident. But in highly complex, opaque environments, doing the work generates massive secondary collisions.

The Modern Equivalent: AI and Software

Fast forward to today. The playing field has shifted from chemistry labs to codebases, but the pattern is identical.

The most opaque, unpredictable environment we have right now is Artificial Intelligence. And the “accidents” are piling up.

Take Chain of Thought (CoT) prompting. It is currently the backbone of how Large Language Models solve complex logic by “thinking” step-by-step. But it wasn’t initially invented by a boardroom of Google engineers planning out the future of AI.

It was accidentally discovered in 2020 by gamers on 4chan messing around with an RPG called AI Dungeon.

They forced the AI NPC to “stay in character” and write out its problem-solving steps one by one.

By doing so, they accidentally realized the model could suddenly calculate correct mathematical answers. The academic papers formalizing it came two years later.

Or look at hardware. Just weeks ago, in March 2026, USC scientists built a revolutionary high-temperature memory chip that can survive 700 degrees and run AI matrix multiplications at record speeds.

How?

The team was originally trying to build a completely different kind of device using graphene. It failed. But the byproduct of that failure was a generational hardware breakthrough.

The operators were in motion. The breakthrough was just collateral damage.

The Cost of Perfect Predictability

Most businesses are obsessed with predictability. They want a guaranteed ROI for every hour of engineering and every dollar of marketing.

But if you optimize your business so heavily that you stamp out all variance, you simultaneously eliminate your surface area for serendipity.

Psychologist Richard Wiseman spent a decade studying why some people always seem to catch the right breaks.

Wiseman didn’t use a complex psychological evaluation, a genetic test, or an algorithm. When he started “The Luck Project”, he simply placed advertisements in national newspapers and magazines.

The ads essentially said: “Do you consider yourself exceptionally lucky or exceptionally unlucky? Contact us.”

Over 400 people responded. He categorized them entirely based on how they viewed their own lives.

The “Lucky” group were people who self-reported that good things just naturally happened to them. They believed they were in the right place at the right time.

The “Unlucky” group were people who self-reported that their lives were a constant string of bad breaks, missed opportunities, and failures.

He put these two groups in a room, handed them a newspaper, and gave them a strict KPI: Count the exact number of photos in this paper. Get it right, and you win £500.

Both groups started counting furiously. But on the second page, Wiseman had placed a massive, half-page ad that read:

“Stop counting. There are 43 photographs in this newspaper. Tell the proctor and collect your money.”

Every single self-proclaimed “lucky” person saw the ad, stopped, and got paid. Almost none of the “unlucky” people saw it.

Near the end of the newspaper, there was another half-page ad that said:

“If you see this, tell the proctor ‘bonus round’ and you’ll get extra money.”

The same pattern held. All the lucky people saw it, and almost none of the unlucky people did.

Lucky people are open to possibilities. They’re focused, but they’re relaxed. Unlucky people are so focused on doing the right thing that they sometimes miss the plot.

Why?

Because the “unlucky” people went into the experiment anxious and convinced they had to grind manually to succeed, their anxiety created a narrow, laser-like focus. They were so stressed about executing the exact KPI (counting the photos) that they suffered from “inattentional blindness”.

The “lucky” people went in relaxed. They believed things would work out, which widened their attention and allowed them to see the half-page ad that the hyper-focused group completely missed.

It suggests that “luck” wasn’t a cosmic force acting upon these people; it was a byproduct of their mindset.

If you manage your team with high anxiety and demand perfect execution on narrow KPIs, you are essentially training them to act like Wiseman’s “unlucky” group. They will hit the metric, but they will walk right past the breakthrough.

Unlucky people obsess over the manual labor; lucky people keep their peripheral vision open for leverage.

In my 20+ years building digital products, I’ve never seen a team stumble into an unfair advantage while sitting around a whiteboard trying to plot the “perfect” strategy.

Whether we were scaling apps to the App Store Top 10 or automating sales at Tap Grow, the biggest leverage points came from shipping a V1, putting it in the hands of real users, and noticing a weird anomaly in the data.

I’ve hit plenty of dead ends where a problem felt completely impossible. But if you mute the frustration and just keep shipping different variables, the wall eventually breaks. You quickly realize the “impossible” is rarely a law of physics; it’s usually just a lack of iterations.

How to engineer an environment for accidents:

Increase your deployment velocity: You can’t have a fortunate accident if you only ship twice a year.

Run parallel experiments: If you are only testing one variable, you are blind to the rest of the ecosystem.

Study the “trash” data: Perkin’s first attempt resulted in a black sludge that he could have easily thrown away. Instead, he investigated it. Look at the features your users are hacking to do things you didn’t intend.
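If you want to see why velocity matters in numbers, here is a rough back-of-the-envelope sketch in Python. It assumes every shipped experiment is independent and has the same small chance of producing a useful surprise. The 2% hit rate and the function name are made up for illustration; they are not measurements from any real team.

```python
def chance_of_at_least_one_hit(experiments: int, hit_rate: float) -> float:
    """Probability that at least one of `experiments` independent tries
    produces a useful surprise, given the same `hit_rate` for each try."""
    if experiments < 0:
        raise ValueError("experiments must be non-negative")
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # The chance that every single try misses is (1 - hit_rate) ** experiments,
    # so the chance of at least one hit is the complement of that.
    return 1.0 - (1.0 - hit_rate) ** experiments


if __name__ == "__main__":
    HYPOTHETICAL_HIT_RATE = 0.02  # assumed value, purely illustrative
    for shipped_per_year in (2, 12, 52):
        odds = chance_of_at_least_one_hit(shipped_per_year, HYPOTHETICAL_HIT_RATE)
        print(f"{shipped_per_year:>2} releases a year -> {odds:.1%} chance of at least one surprise")
```

The exact numbers don’t matter. The shape does: under these assumptions the odds climb with every extra release, and they only pay off if someone is watching for the anomaly when it shows up.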

Stop trying to plan the perfect breakthrough. Build a system, crank up your velocity, and pay attention to what breaks.

Luck is just a trailing indicator of doing enough reps.

This is a mindset shift.

To truly change how you see the world, you need to keep this message in sight and keep coming back to the ideas from the article.

So here’s a poster you might want to hang on your office wall. No need to thank me 😉

The Wiseman newspaper study is one of the better pieces of luck research out there. The practical translation for founders: high deployment velocity isn't just about speed — it's about surface area. A team that ships 10 experiments a quarter has 10x the collision opportunities of one that ships one 'perfect' launch. The 'lucky' companies aren't luckier. They just have more at-bats, and they're paying attention when something unexpected shows up in the data.

Thank you! I needed to see this today to encourage me in maintaining momentum.